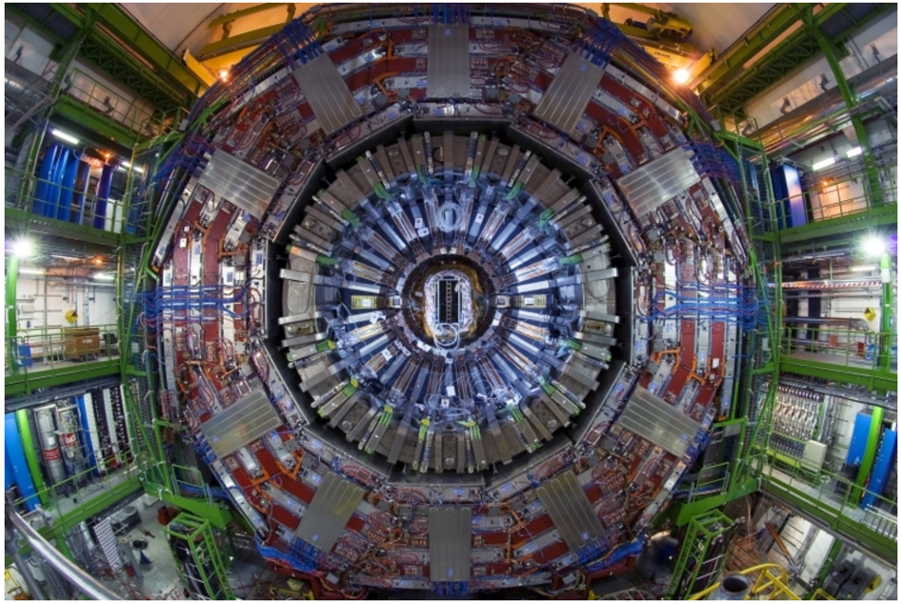

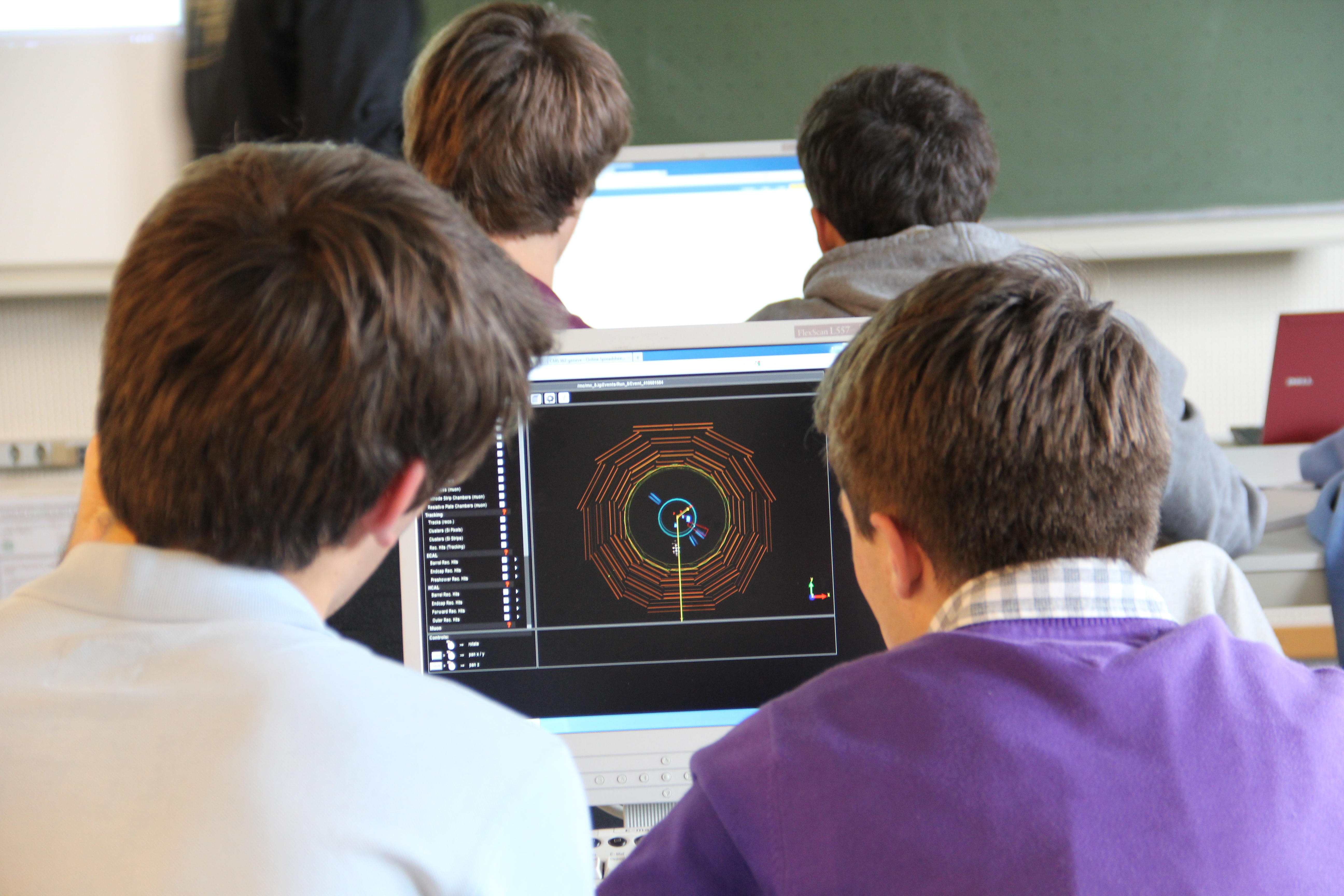

The Compact Muon Solenoid (CMS) Collaboration at CERN is excited to announce the public release of the first batch of high-level, analysable and open data from the Large Hadron Collider (LHC), recorded by the CMS detector. The datasets are available on the new CERN Open Data Portal and are being released into the public domain under the Creative Commons CC0 waiver, in keeping with CMS’s commitment to data preservation and open data. “We have a duty to society to do so,” says Tiziano Camporesi, CMS Spokesperson. “The scientific knowledge we produce is for everyone to share and we hope educational tools built on top of our data will inspire the next generation of scientists.”

The first batch corresponds to approximately half of the total data volume recorded in 2010, the first year of LHC operations. While the data are in a processed format that is suited to analysis, they are still quite complex, and performing an analysis with them is difficult: it takes CMS scientists, working in groups and relying upon each other’s expertise, many months or even years to perform a single analysis, which must then be scrutinised by the whole collaboration before a scientific paper can be published. A first-time analysis typically takes about a year from the start of preparation to publication, not counting the six months it takes newcomers to learn the analysis software.

Acknowledging these challenges, CMS is providing basic documentation to accompany the data, in the form of some simple (open-source) analysis examples that let users familiarise themselves with the CMS analysis environment and get started with the data. In addition, the portal hosts simplified analysis examples that can be used in a classroom environment, at both the high-school and the university level, as well as example applications that developers can learn from and build upon. Since the collaboration’s experts are devoted to new physics analyses, CMS has limited resources, provided on a voluntary basis, for additional support.

Data samples and analysis tools used in the international Physics Masterclass exercise developed by QuarkNet and in the CMS e-Lab run by I2U2 are suited to the high-school classroom setting. CMS collaborators have also developed applications for university students with CMS open data: the HEP Tutorial by Christian Sander and Alexander Schmidt of Hamburg University provides an introduction to particle physics through an analysis of top quarks; the web-based VISPA tool developed at RWTH Aachen University is used by third-year undergraduate students to perform simple CMS analyses, such as calculating the mass of particles produced at the LHC.

“Several people have invested time and effort in bringing us this far,” adds Kati Lassila-Perini, CMS Data Preservation and Open Data coordinator, “but we know that this is just the beginning. The first of our open data are now available for the whole world to enjoy, and we look forward to hearing from developers and educators on how they are used!”

CMS would also like to thank the following in particular for their efforts in bringing our open data and tools to the portal: CERN’s IT Department, Scientific Information Service and PH-SFT group; Tom McCauley from the University of Notre Dame, USA; Adam Huffman and David Colling from Imperial College London, UK; Ana Rodríguez Marrero, Alicia Calderón Tazón and Jesús Marco from IFCA, CSIC-University of Cantabria, Spain; all the CMS Physics Object Group (POG) conveners; Andreas Pfeiffer and the CMS AlCaDB team; the QuarkNet programme, USA; and the Lapland University of Applied Sciences, Finland.

Data and analysis tools

The following are provided through the portal:

- Downloadable datasets

  - Primary datasets: full reconstructed data with no other selections. The data here are referred to as “reconstructed data”: fragmented data from various sub-detectors are processed or “reconstructed” to provide coherent information about individual physics objects such as electrons or particle jets

  - Examples of simplified datasets derived from the primary ones for use in different applications and analyses

- Tools

  - A downloadable Virtual Machine (VM) image with the CMS software environment through which the primary datasets can be accessed

  - An analysis example chain, reading the primary dataset and producing intermediate derived data for the final analysis

  - Ready-to-use online applications, such as an event display and simple histogramming software

  - Source code for the various examples and applications, available in the CMS Tools collection
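To give a flavour of the simple analyses these tools are aimed at, such as calculating the mass of a particle from its decay products, here is a minimal Python sketch of an invariant-mass calculation for a pair of muons. It is not taken from the CMS Tools collection: the function names, the input convention (transverse momentum, pseudorapidity, azimuthal angle) and the example values are all assumptions made for illustration.

```python
import math

MUON_MASS = 0.105658  # muon rest mass in GeV


def four_vector(pt, eta, phi, mass):
    """Convert (pt, eta, phi, mass) into (E, px, py, pz), all in GeV."""
    px = pt * math.cos(phi)
    py = pt * math.sin(phi)
    pz = pt * math.sinh(eta)
    energy = math.sqrt(px**2 + py**2 + pz**2 + mass**2)
    return energy, px, py, pz


def invariant_mass(p1, p2):
    """Invariant mass of the system formed by two four-vectors."""
    energy = p1[0] + p2[0]
    px = p1[1] + p2[1]
    py = p1[2] + p2[2]
    pz = p1[3] + p2[3]
    m_squared = energy**2 - px**2 - py**2 - pz**2
    # Guard against tiny negative values caused by floating-point rounding.
    return math.sqrt(max(m_squared, 0.0))


# Hypothetical event: two back-to-back muons, each with pt = 45 GeV.
mu1 = four_vector(pt=45.0, eta=0.0, phi=0.0, mass=MUON_MASS)
mu2 = four_vector(pt=45.0, eta=0.0, phi=math.pi, mass=MUON_MASS)
print(f"dimuon mass: {invariant_mass(mu1, mu2):.2f} GeV")
```

For this made-up pair the result is close to 90 GeV. In a classroom exercise the same calculation would be repeated over many events and the resulting masses filled into a histogram.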

Primary datasets

- Data in the primary datasets are in a format known as AOD, or Analysis Object Data.

- AOD files contain the information that is needed for analysis:

  - all the high-level physics objects (such as muons, electrons, etc.);

  - tracks with associated hits, calorimetric clusters with associated hits, vertices; and

  - information about event selection (triggers), data needed for further selection and identification criteria for the physics objects.

- An AOD file is not the final event interpretation with a simple list of particles.

  - It contains several instances of the same physics object (e.g. a jet reconstructed with different algorithms).

  - It may have double-counting (e.g. a physics object may appear as a single object of its own type, but it may also be part of a jet).

  - Additional knowledge is needed to define a “good” physics object.

  - The definition of the same object differs from one analysis to another.

- AOD files can be read in ROOT, but they cannot be opened (and understood) as simple data tables.

- Only the runs that are validated by data quality monitoring should be used in any analysis. The list of the validated runs is provided.
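The last point, restricting an analysis to validated runs, can be sketched in a few lines of Python. The layout assumed here (a JSON object mapping each run number to a list of inclusive ranges of luminosity sections) is an assumption for illustration, as are the function names and the invented run numbers; check the documentation that accompanies the provided list for its actual format.

```python
import json


def load_validated_runs(text):
    """Parse the assumed JSON layout into {run: [(first_lumi, last_lumi), ...]}."""
    raw = json.loads(text)
    return {int(run): [tuple(r) for r in ranges] for run, ranges in raw.items()}


def is_validated(run, lumi, validated):
    """True if (run, lumi) falls inside one of the validated ranges."""
    return any(first <= lumi <= last for first, last in validated.get(run, []))


# Hypothetical validated-run list; these run numbers are made up.
EXAMPLE_LIST = '{"100001": [[1, 50], [60, 75]], "100002": [[1, 20]]}'
validated = load_validated_runs(EXAMPLE_LIST)

# Hypothetical events, each tagged with its run and luminosity section.
events = [
    {"run": 100001, "lumi": 10},  # inside a validated range
    {"run": 100001, "lumi": 55},  # falls in the gap between two ranges
    {"run": 100003, "lumi": 1},   # run not in the list at all
]

selected = [ev for ev in events if is_validated(ev["run"], ev["lumi"], validated)]
print(selected)
```

Only the first of the three example events passes the filter. Applying such a check before any physics selection keeps data recorded under problematic detector conditions out of the analysis.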

Disclaimer

- The open data are released under the Creative Commons (CC0) waiver. Neither CMS nor CERN endorses any works, scientific or otherwise, produced using these data.

- All datasets will have a unique DOI that you are requested to cite in any applications or publications.

- Despite being processed, the high-level primary datasets remain complex and selection criteria need to be applied in order to analyse them, requiring some understanding of particle physics and detector functioning.

- The data cannot be viewed in simple data tables for spreadsheet-based analyses.

- No further development is foreseen for either the data released or the software version needed to analyse them.

- CMS had just started data taking in 2010 and the methods have evolved since those early days, in particular to respond to the evolving beam conditions of the LHC.

- More advanced techniques are used with recent data but the software is not compatible out-of-the-box with older data samples.

- The release of 2010 data is not accompanied by any simulated data. Future releases may include this information.

- If you are interested in more, please contact your nearest CMS university or institute.

Other CMS open data

- All CMS publications are open access.

- Some of the papers also include open data in the form of additional tables, plots, graphs and Rivet packages.
