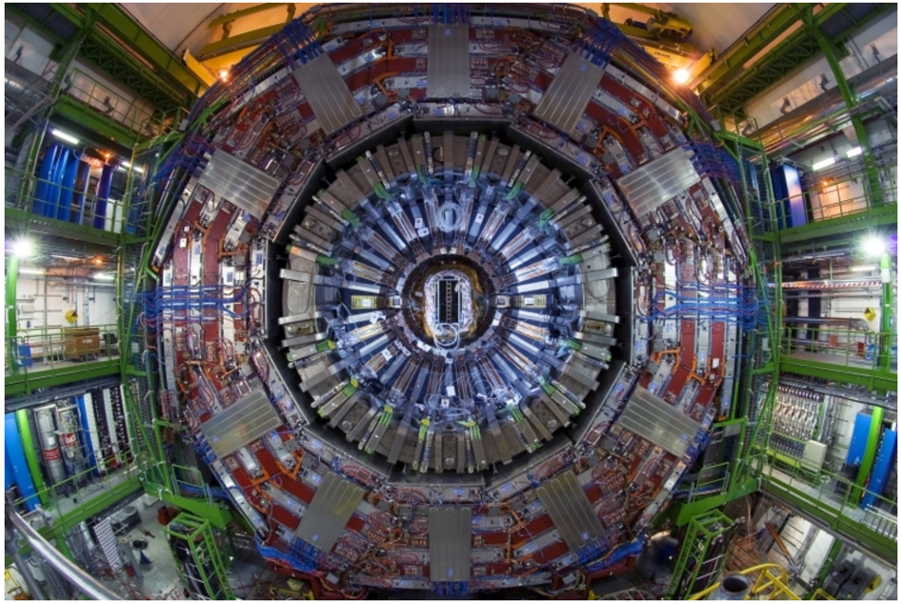

Managing the enormous volume of data produced by Large Hadron Collider (LHC) collisions is a major challenge for CMS physicists. The detector produces more than 500 terabytes of data per second. Even after sophisticated real-time processing has filtered the collision events online within a fraction of a second, tens of petabytes of data per year are stored offline for further analysis. The task will become even harder when the High-Luminosity LHC increases the collision rate by up to a factor of five. To meet this grand challenge, CMS physicists are applying state-of-the-art machine learning methods at every stage of data processing. From real-time filtering to offline data analysis, they use machine learning to improve physics performance, accelerate computations, improve data quality, and optimize searches for new physics signatures.

The very first step of processing CMS data is to select only the most interesting of the 40 million collisions per second, reducing the storage space needed. To achieve this real-time data reduction, the first-level filtering algorithms must run within hundreds of nanoseconds! They are therefore implemented in custom electronics built on field-programmable gate arrays (FPGAs). Even in such an extreme environment, CMS scientists have been using machine learning techniques such as boosted decision trees and, more recently, modern deep neural networks, the latter through hls4ml (High Level Synthesis for Machine Learning), a pilot project led by CMS researchers.
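To give a feel for what running machine learning in custom electronics involves, here is a minimal sketch that evaluates one fully connected neural-network layer with a ReLU activation using only integer fixed-point arithmetic, the number format FPGA firmware typically uses in place of floating point. It is plain Python written for readability; it is not hls4ml's API or the code hls4ml generates, and the choice of 10 fractional bits is an arbitrary assumption for illustration.

```python
# Minimal sketch (not hls4ml code): one dense layer + ReLU evaluated with
# integer fixed-point arithmetic, the number format FPGA firmware typically uses.
FRAC_BITS = 10           # illustrative assumption: 10 fractional bits
SCALE = 1 << FRAC_BITS   # the real value 1.0 is stored as the integer 1024


def to_fixed(x: float) -> int:
    """Round a real number to the nearest representable fixed-point integer."""
    return round(x * SCALE)


def to_float(q: int) -> float:
    """Convert a fixed-point integer back to a real number."""
    return q / SCALE


def dense_relu_fixed(inputs, weights, biases):
    """Compute y_j = max(0, sum_i w_ji * x_i + b_j) using integer operations only."""
    qx = [to_fixed(v) for v in inputs]
    outputs = []
    for w_row, b in zip(weights, biases):
        # A product of two fixed-point numbers carries 2 * FRAC_BITS fractional
        # bits, so accumulate at that precision and rescale once at the end.
        acc = sum(to_fixed(w) * xi for w, xi in zip(w_row, qx))
        acc += to_fixed(b) << FRAC_BITS
        acc >>= FRAC_BITS             # arithmetic shift: rounds toward minus infinity
        outputs.append(max(0, acc))   # ReLU is just a comparison
    return [to_float(q) for q in outputs]


def dense_relu_float(inputs, weights, biases):
    """Floating-point reference implementation of the same layer."""
    return [max(0.0, sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]


x = [0.5, -1.25, 2.0]
W = [[0.3, -0.7, 0.1], [-0.2, 0.4, 0.9]]
b = [0.05, -0.1]
print(dense_relu_fixed(x, W, b))   # fixed-point result
print(dense_relu_float(x, W, b))   # floating-point reference
```

For these toy inputs the two results agree to within a few thousandths. Each extra fractional bit halves the rounding step at the cost of wider integers, so trading precision against FPGA resources is a key design choice in this setting.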

Machine learning is also being used both to modernize the daily operation of the CMS simulation and data-processing chain and to improve data analysis and physics results.

Innovative approaches based on supervised and unsupervised learning have been studied for real-time anomaly detection in CMS data, to ensure data quality, monitor the detector, and even search for new physics.
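One common unsupervised recipe for data-quality monitoring is to learn what "good" data look like and flag anything the model cannot reproduce. The sketch below uses principal component analysis (PCA), which behaves like a linear autoencoder, as a simple stand-in for the neural-network methods being studied. The synthetic Gaussian-shaped histograms, the number of components, the "dead channel" failure, and the threshold rule are all hypothetical choices for illustration, not CMS's monitoring setup.

```python
import numpy as np


def fit_reference(good_runs: np.ndarray, n_components: int):
    """Learn a low-dimensional linear model of known-good monitoring histograms.

    good_runs has shape (n_runs, n_bins); each row is one run's normalised histogram.
    Returns the mean histogram and the leading principal directions.
    """
    mean = good_runs.mean(axis=0)
    # Rows of vt are orthonormal principal directions, ordered by explained variance.
    _, _, vt = np.linalg.svd(good_runs - mean, full_matrices=False)
    return mean, vt[:n_components]


def anomaly_score(run: np.ndarray, mean: np.ndarray, components: np.ndarray) -> float:
    """Squared reconstruction error: large when the reference model cannot describe the run."""
    centred = run - mean
    # Project onto the principal directions and map back to histogram space.
    reconstructed = components.T @ (components @ centred)
    return float(np.sum((centred - reconstructed) ** 2))


# Hypothetical reference data: Gaussian-shaped histograms whose width fluctuates by ~5%.
rng = np.random.default_rng(0)
bins = np.linspace(-3.0, 3.0, 30)
good = np.array([np.exp(-0.5 * (bins / (1.0 + 0.05 * rng.standard_normal())) ** 2)
                 for _ in range(200)])
good /= good.sum(axis=1, keepdims=True)

mean, components = fit_reference(good, n_components=2)
# Illustrative threshold: the worst score seen on the reference runs themselves.
threshold = max(anomaly_score(run, mean, components) for run in good)

# Simulate a run with three dead channels and check whether it is flagged.
bad = good[0].copy()
bad[10:13] = 0.0
print(anomaly_score(bad, mean, components) > threshold)
```

Because the model is trained only on good runs, no labelled examples of failures are needed, which is the main appeal of the unsupervised approach for detector monitoring.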

The CMS scientific computing group is using machine learning algorithms to predict how datasets will be used, in order to optimize data storage and retrieval. CMS also pioneered the integration of deep learning frameworks into its core software stack, allowing trained models to be used in analysis and reconstruction.

The generation of simulated collision events to compare with real data is another essential and computationally intensive task for CMS. Generative models have been used recently to produce very realistic images of people and objects. CMS scientists are studying the potential of these methods to drastically reduce the time it takes to perform simulations.

To improve the scientific results of CMS, machine learning is being applied to the reconstruction of raw detector data into physics objects, achieving state-of-the-art results in heavy- and light-quark flavour tagging, jet identification, and the estimation of particle properties with regression techniques. For example, the efficiency improvement in identifying sprays of particles from bottom quarks (heavy-flavour tagging) is equivalent to running the LHC for twice as long! CMS physicists are also exploring cutting-edge geometric deep learning methods to represent and process their data more efficiently.
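One way to read the "twice as long" comparison, assuming a simple counting experiment dominated by background, is through the approximate significance $Z \approx S/\sqrt{B}$. With integrated luminosity $L$, signal and background cross sections $\sigma_S, \sigma_B$, and tagging efficiencies $\varepsilon_S, \varepsilon_B$ (notation introduced here for illustration):

$$
S = L\,\sigma_S\,\varepsilon_S, \qquad B = L\,\sigma_B\,\varepsilon_B
\quad\Longrightarrow\quad
Z \approx \frac{S}{\sqrt{B}} = \sqrt{L}\,\frac{\sigma_S}{\sqrt{\sigma_B}}\,\frac{\varepsilon_S}{\sqrt{\varepsilon_B}} .
$$

Doubling $L$ raises $Z$ by a factor of $\sqrt{2}$, so a tagger that raises $\varepsilon_S/\sqrt{\varepsilon_B}$ by the same factor of about 1.41 delivers the same sensitivity without extra running time. This is a textbook approximation rather than the calculation behind the quoted figure. For final states with two b jets, the per-event efficiency scales roughly as the square of the per-jet efficiency, so modest per-jet gains compound.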

Today, many new CMS publications rely on machine learning techniques for object identification and event classification to suppress the copious background processes that contaminate the signals of interest. Recent examples include CMS’s observations of the Higgs boson decaying to bottom quarks and of Higgs boson production in association with top quarks, searches for rare Higgs boson decays to muon pairs, various supersymmetry (SUSY) searches, and many others.
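Event classification often ends with a cut on the classifier output. The sketch below assumes per-event classifier scores and event weights are already available, uses the simple $S/\sqrt{B}$ figure of merit, and scans candidate thresholds to keep the one with the highest approximate significance. The toy scores and weights are hypothetical, and real analyses frequently fit the full score distribution instead of applying a single cut, so treat this as illustrative only.

```python
import math


def best_threshold(sig_scores, sig_weights, bkg_scores, bkg_weights):
    """Scan cuts on a classifier score and return (threshold, S / sqrt(B)).

    Events with score >= threshold are kept. S and B are the summed event
    weights of the kept signal and background events.
    """
    best_cut, best_z = None, 0.0
    for cut in sorted(set(sig_scores) | set(bkg_scores)):
        s = sum(w for x, w in zip(sig_scores, sig_weights) if x >= cut)
        b = sum(w for x, w in zip(bkg_scores, bkg_weights) if x >= cut)
        if b <= 0:
            # S / sqrt(B) is undefined with no background left; skip this cut.
            continue
        z = s / math.sqrt(b)
        if z > best_z:
            best_cut, best_z = cut, z
    return best_cut, best_z


# Toy inputs: hypothetical classifier scores, with each background event
# weighted 10x to mimic a much larger background cross section.
sig = [0.9, 0.8, 0.7, 0.4]
bkg = [0.1, 0.2, 0.3, 0.75, 0.85]
print(best_threshold(sig, [1.0] * len(sig), bkg, [10.0] * len(bkg)))
# best cut 0.4, S/sqrt(B) ≈ 0.894
```

A better classifier pushes signal towards high scores and background towards low scores, which is exactly what lets a tighter cut keep more signal per unit of remaining background.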

As CMS enters the two-year shutdown and prepares for Run 3 of the LHC, the collaboration will have the opportunity to implement and further explore exciting machine learning methods that will continue to revolutionize physics at the CMS experiment.

-------------------------------

For CMS members:

For more information on the CMS Machine Learning Forum, please subscribe to the HyperNews forum hn-cms-machine-learning@cern.ch and see the CMS ML Forum TWiki page: https://twiki.cern.ch/twiki/bin/viewauth/CMS/CmsMLForum
